HOTL Orders of Autonomous System Capability

The human never leaves the loop. The loop gets bigger.

Disclaimer: this is a distillation by Opus 4.6 of thinking from a very lengthy chat with Opus 4.6.

Order 0 is HITL. Every order above it is a new OODA loop. The human is always on one.

Every autonomous system operates in a cycle: act, observe, adjust. The question that determines a system’s capability is not “how autonomous is it?” but “which OODA loop (observe, orient, decide, act) is the human on?”

The answer names the order.

At Order 0 the human is inside the loop — a required step. Nothing executes without them. At every order above 0 the loop runs freely. The human is not inside it. They are on it: watching, able to intervene, not required to at every iteration. The loop runs while they sleep.

Order 0 is the only true HITL order. Every order above it is a HOTL order, distinguished by which loop the human is currently on. The pattern at each transition is identical: the human hands the current loop’s OODA function to an agent and steps up to watch that agent from above. They exit by delegating. They re-enter one level up. They are always on a loop. Never on the same loop twice.

The Mechanism

Each transition works by the same mechanism: subject becomes object.

What you were embedded in (what you could only act from, not examine) becomes something you can hold and modify. Take Ralph, the looping coding agent described under Order 1 below. Ralph executes within his prompt. He cannot observe it. The prompt is his subject. The Order 1 human watches Ralph run and edits PROMPT.md between loops. The prompt is now an object: a thing being acted on, not merely acted from. At Order 2 an agent takes over the work of watching Ralph. The human is now on a loop whose object is the Ralph run itself: the session logs, the drift patterns, the failure signatures. What was the human’s operational medium has become their observational target.

This is also why higher orders remain tractable. Prior complexity does not stack — it compresses. The entire internal workings of a loop fold into a single node at the next order. The Order 2 human does not manage Ralph’s internals. They see one thing: the Ralph run. Its internals are below their resolution. Each order stands on a floor that has already been folded up.

Backpressure is what makes a loop foldable. A loop without automated feedback cannot run unattended — it needs a human inside to evaluate its own output, which means it cannot fold into a HOTL node. A type checker frees the human from verifying syntax. A test suite frees the human from verifying behavior. Each piece of backpressure removes one reason the human had to stay inside the loop. When all those reasons are gone, the loop can fold and the human can step up.

Before building any new capability, ask: what automated feedback will tell this loop whether its output is good? Design that first. The capability follows.
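One way to make that question concrete, as a minimal sketch: list every question the loop must answer about its own output and mark whether a tool answers it. The `Check` type, the helper functions, and the example checks are hypothetical. A loop can fold into a HOTL node only when nothing on the list still needs a human.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Check:
    """One question the loop must answer about its own output."""
    name: str
    automated: bool  # True if a tool answers it (type checker, test suite, ...)


def reasons_human_stays_inside(checks: list[Check]) -> list[str]:
    """Return the checks that still require a human inside the loop."""
    return [c.name for c in checks if not c.automated]


def can_fold(checks: list[Check]) -> bool:
    """A loop can fold into a HOTL node only if every check is automated."""
    return not reasons_human_stays_inside(checks)


# Hypothetical code-generation loop: syntax and behavior are covered by tools,
# but nobody has automated the judgment of whether the API design is sound.
checks = [
    Check("syntax", automated=True),       # type checker
    Check("behavior", automated=True),     # test suite
    Check("api design", automated=False),  # still a human review
]
print(reasons_human_stays_inside(checks))  # ['api design']
print(can_fold(checks))                    # False
```

Each entry that flips from `automated=False` to `True` is one piece of backpressure removing one reason to stay inside.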

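An organization’s effective HOTL Order is capped by the highest order up to which it has functioning backpressure at every level beneath it. Backpressure at Order 5 does nothing for an organization whose Order 2 loop cannot yet fold.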

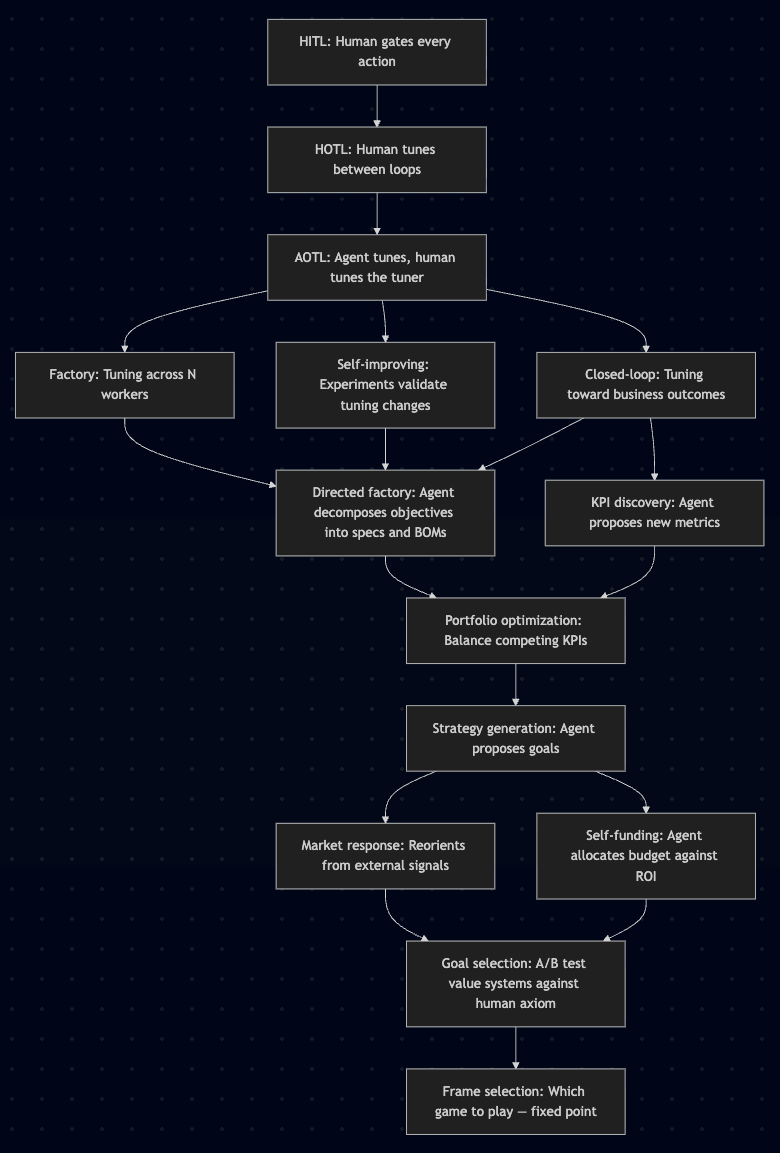

The progression between orders is not always linear. Three independent capabilities branch from Order 2 and converge at Order 4, forming a directed acyclic graph rather than a ladder:
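The graph described above can be sketched in Python under stated assumptions: an order counts as folded only when it has its own functioning backpressure and every prerequisite is already folded, and Order 0 is always folded because the human is its backpressure. The text states the 3a to 3c branch explicitly; treating 8a and 8b as joint prerequisites of Order 9 is an assumption. All names are hypothetical.

```python
# Prerequisites per order. The 3a-3c branch converging at 4 is from the text;
# the 8a/8b branch converging at 9 is an assumption made by analogy.
PREREQS: dict[str, tuple[str, ...]] = {
    "0": (),
    "1": ("0",),
    "2": ("1",),
    "3a": ("2",), "3b": ("2",), "3c": ("2",),
    "4": ("3a", "3b", "3c"),
    "5": ("4",),
    "6": ("5",),
    "7": ("6",),
    "8a": ("7",), "8b": ("7",),
    "9": ("8a", "8b"),
    "10": ("9",),
}


def reached_orders(has_backpressure: set[str]) -> set[str]:
    """Orders that are folded: own backpressure plus every prerequisite folded."""
    reached = {"0"}  # the human inside the loop is Order 0's backpressure
    changed = True
    while changed:  # keep propagating until nothing new folds
        changed = False
        for order, prereqs in PREREQS.items():
            if (order not in reached and order in has_backpressure
                    and all(p in reached for p in prereqs)):
                reached.add(order)
                changed = True
    return reached


def frontier(has_backpressure: set[str]) -> set[str]:
    """Orders whose prerequisites are folded but whose own backpressure is missing."""
    reached = reached_orders(has_backpressure)
    return {o for o, prereqs in PREREQS.items()
            if o not in reached and all(p in reached for p in prereqs)}


# Hypothetical organization: backpressure at 1, 2, 3a, 3b, and (oddly) 5.
bp = {"1", "2", "3a", "3b", "5"}
print(sorted(reached_orders(bp)))  # ['0', '1', '2', '3a', '3b']
print(sorted(frontier(bp)))        # ['3c']
```

The Order 5 backpressure counts for nothing here because Order 4 has not folded; the frontier names what to build next.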

The Orders

Order 0 — Direct Control (HITL)

The human is a required step inside the loop. Nothing executes without them. They provide all feedback — they are the backpressure.

When the human intervenes, they touch: every tool call and code change. Backpressure: none automated. You know you’re here when: nothing happens while the operator is away.

Order 1 — The Ralph Loop (HOTL: human on the work loop)

The agent executes autonomously in a continuous loop. The human is on that loop — watching output between iterations, ready to intervene. When they do, it takes the form of prompt tuning: adding constraints, resetting the workspace, adjusting the specification. Each time the agent does something bad, the prompt gets tuned — like a guitar.

This is Geoffrey Huntley’s Ralph Wiggum technique: `while :; do cat PROMPT.md | claude-code; done`. The prompt is now object. The human is shaping the environment the loop acts within, not acting inside it.

The human’s OODA at this order: observe Ralph’s output, orient to failure patterns, decide on prompt adjustments, act on PROMPT.md.

When the human intervenes, they tune: prompt constraints, specifications, workspace state. Backpressure: build system, type checker, test suite, linter — anything that catches failures before the human sees them. You know you’re here when: the operator’s primary activity is editing the prompt. Work continues while the operator is away.
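A minimal Python sketch of the same loop with backpressure wired in. The agent and the checks are hypothetical callables injected by the caller (no real CLI is invoked), and the loop is bounded so it can be demonstrated. The key property is that the prompt is re-read every iteration, so the human’s edits between loops take effect.

```python
import tempfile
from pathlib import Path
from typing import Callable

# Hypothetical signatures. An agent receives the prompt text and works on the
# repository; a backpressure check returns (passed, detail). Real checks would
# wrap the project's build, type checker, test suite, or linter.
Agent = Callable[[str], None]
BackpressureCheck = Callable[[], tuple[bool, str]]


def ralph(prompt_path: Path, agent: Agent, checks: list[BackpressureCheck],
          max_iterations: int) -> list[list[str]]:
    """Run the agent repeatedly and record backpressure failures per iteration.

    The prompt is re-read on every iteration, so edits the human makes to the
    prompt file between loops take effect on the next run. Those edits are the
    Order 1 intervention; everything else runs unattended.
    """
    history: list[list[str]] = []
    for _ in range(max_iterations):  # bounded here; the shell original loops forever
        agent(prompt_path.read_text())
        results = [check() for check in checks]
        history.append([detail for ok, detail in results if not ok])
    return history


# Demo with stand-ins: the agent just records each prompt it is given, and the
# "build" check only passes once the agent has run at least twice.
with tempfile.TemporaryDirectory() as tmp:
    prompt = Path(tmp) / "PROMPT.md"
    prompt.write_text("Implement the next item in the plan.")
    runs: list[str] = []
    history = ralph(prompt, runs.append,
                    [lambda: (len(runs) >= 2, "build failed")], max_iterations=3)

print(history)  # [['build failed'], [], []]
```

The `history` list is what the human on the loop reads between iterations; the checks catch failures before the human has to.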

Order 2 — Agent Tuning (HOTL: human on the Ralph-watching loop)

An agent takes over the Order 1 OODA cycle. It watches the Ralph run — session logs, plan drift, rework patterns — and proposes the tuning a human would perform at Order 1. The human is no longer on the Ralph loop. They are on the loop that watches the agent watching Ralph.

The Ralph run has compressed into a node. What the human sees is a synthesis of it — failure signatures, proposed interventions. The loop’s internals are below their resolution.

The human’s OODA at this order: observe the tuning agent’s recommendations, orient to whether they are sound, decide to apply or reject, act.

When the human intervenes, they act on: tuning recommendations from the tuning agent. Backpressure: measurable tuning impact — did rework rate drop? Did convergence improve? Without this, the human is gut-checking recommendations, which is Order 1 with extra steps. You know you’re here when: the operator receives “worker meandered for 3 loops on permissions — recommend adding a scoping constraint” and says “yes, apply that.”
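A minimal sketch of measurable tuning impact, assuming iteration logs flag which iterations redid earlier work. `Recommendation`, the rework flag, and the logs are hypothetical. A single before/after delta is weak evidence on its own; Order 3b is where the comparison gets experimental rigor.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Recommendation:
    # Hypothetical shape of what the tuning agent hands the Order 2 human.
    failure_signature: str
    proposed_change: str


def rework_rate(iterations: list[bool]) -> float:
    """Share of iterations that redid earlier work (True means rework)."""
    return sum(iterations) / len(iterations) if iterations else 0.0


def tuning_impact(before: list[bool], after: list[bool]) -> float:
    """Change in rework rate after applying a recommendation; negative is better."""
    return rework_rate(after) - rework_rate(before)


rec = Recommendation("worker meandered for 3 loops on permissions",
                     "add a scoping constraint")
before = [True, True, True, False, False, True, False, False]    # 4 of 8 reworked
after = [False, True, False, False, False, False, False, False]  # 1 of 8 reworked
print(f"{rec.proposed_change}: {tuning_impact(before, after):+.3f}")
# add a scoping constraint: -0.375
```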

Order 3a — Factory (HOTL: human on the fleet-tuning loop)

The tuning agent applies learnings across N parallel workers. A constraint erected from one worker’s failure propagates to all active workers. Fleet breadth has become object: a failure pattern in one worker is just what happened to that run, but across N workers it becomes a visible, addressable pattern.

When the human intervenes, they act on: fleet-wide tuning changes. Backpressure: per-worker backpressure aggregated across the fleet — pass rates, failure distributions, convergence patterns. You know you’re here when: a prompt change from one worker’s failure immediately affects all workers.
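A sketch of the two fleet-level moves, assuming each worker reports a set of failure signatures and carries a list of prompt constraints. The signatures, the `min_workers` threshold, and the constraint text are hypothetical.

```python
from collections import Counter


def fleet_patterns(failures_by_worker: dict[str, set[str]],
                   min_workers: int) -> dict[str, int]:
    """Failure signatures observed in at least `min_workers` distinct workers."""
    counts = Counter(sig for sigs in failures_by_worker.values() for sig in sigs)
    return {sig: n for sig, n in counts.items() if n >= min_workers}


def propagate(constraint: str, prompts: dict[str, list[str]]) -> None:
    """Add one constraint to every active worker's prompt, skipping duplicates."""
    for constraints in prompts.values():
        if constraint not in constraints:
            constraints.append(constraint)


# Hypothetical fleet of three workers and the failures each one hit.
failures = {
    "w1": {"permissions meander", "flaky test retry"},
    "w2": {"permissions meander"},
    "w3": {"permissions meander", "lint loop"},
}
print(fleet_patterns(failures, min_workers=2))  # {'permissions meander': 3}

prompts: dict[str, list[str]] = {"w1": [], "w2": [], "w3": []}
propagate("Scope permission changes to the current task.", prompts)
```

Counting distinct workers rather than raw occurrences keeps one noisy worker from looking like a fleet-wide pattern.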

Order 3b — Self-Improving (HOTL: human on the tuning-validation loop)

The tuning agent runs controlled experiments to validate its own changes. “This constraint reduced meandering in 8 of 10 test loops.” The validity of tuning decisions has become object — not just “here is a recommendation” but “here is evidence that recommendations work.”

When the human intervenes, they act on: experimental evidence about tuning effectiveness. Backpressure: A/B results with statistical validity. This order IS backpressure on the tuning process itself. You know you’re here when: the system produces A/B results about its own tuning decisions.
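A minimal sketch of one way to attach statistical validity to a claim like the one above. Fisher’s exact test is our choice, not something the text prescribes, and the counts are hypothetical: 8 of 10 control loops meandered without the constraint, 2 of 10 with it.

```python
from math import comb


def fisher_one_sided(a: int, b: int, c: int, d: int) -> float:
    """One-sided Fisher exact p-value for the 2x2 table

                     meandered   did not
        control          a          b
        treatment        c          d

    Null hypothesis: the constraint makes no difference. With all margins
    fixed, the control meander count is hypergeometric; the p-value sums the
    probabilities of tables where control meanders at least `a` times.
    """
    n_control, n_treatment = a + b, c + d
    meandered = a + c
    denom = comb(n_control + n_treatment, meandered)
    return sum(
        comb(n_control, x) * comb(n_treatment, meandered - x)
        for x in range(a, min(n_control, meandered) + 1)
    ) / denom


# Hypothetical A/B: 8 of 10 control loops meandered, 2 of 10 treated loops did.
p = fisher_one_sided(8, 2, 2, 8)
print(f"p = {p:.4f}")  # p = 0.0115
ALPHA = 0.05  # assumed significance threshold
print("evidence the constraint helps" if p < ALPHA else "not enough evidence")
```

An exact test suits the small loop counts typical of tuning experiments, where normal approximations are unreliable.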

Order 3c — Closed-Loop (HOTL: human on the outcome loop)

The tuning signal shifts from agent artifacts to business outcomes. Not “did the agent meander” but “did the deliverable pass validation” or “was the output accepted without rework.” The target of the whole stack has become object — the system is now measuring whether it is pointed at the right thing.

When the human intervenes, they act on: which outcome metrics the system tunes against. Backpressure: business outcome measurement — deployment success, acceptance rates, defect rates. The most powerful backpressure because it is the least gameable. You know you’re here when: the system references metrics from outside its own loop.
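A sketch of why the shift matters, using hypothetical numbers: ranked by an agent artifact (meander rate) and by an outcome (deliverables accepted without rework), the same two candidate changes can come out in opposite order. The `Trial` type and both candidates are illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Trial:
    # Hypothetical measurements for one candidate tuning change.
    change: str
    meander_rate: float  # agent artifact: lower looks better
    accepted: int        # outcome: deliverables accepted without rework
    delivered: int


def acceptance_rate(t: Trial) -> float:
    return t.accepted / t.delivered if t.delivered else 0.0


def best_by_artifact(trials: list[Trial]) -> str:
    return min(trials, key=lambda t: t.meander_rate).change


def best_by_outcome(trials: list[Trial]) -> str:
    return max(trials, key=acceptance_rate).change


trials = [
    # Hypothetical: tight scoping stops meandering but ships work that gets sent back.
    Trial("tight scoping", meander_rate=0.05, accepted=12, delivered=20),
    Trial("plan review step", meander_rate=0.20, accepted=17, delivered=20),
]
print(best_by_artifact(trials))  # tight scoping
print(best_by_outcome(trials))   # plan review step
```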

Orders 3a, 3b, and 3c are three independent subject-to-object transitions, not steps in a sequence. Each makes a different aspect of Order 2 practice examinable: breadth (can patterns be seen across many workers?), rigor (can tuning decisions be validated?), and ground truth (is the system measuring the right thing?). Each delivers value independently. All three must be compressed nodes before Order 4 is safe — decomposing objectives into autonomous work requires confidence in all three simultaneously. Any one missing and the decomposition is being approved on faith, not evidence.

Order 4 — Directed Factory (HOTL: human on the objective-decomposition loop)

The system receives objectives, not specifications. An objective becomes a specification, a materials manifest, worker configurations, and a validation suite — all system-generated. The factory is directed by outcomes rather than instructions. Requires 3a, 3b, and 3c as compressed nodes.

The human’s OODA at this order: observe the system’s decomposition of an objective, orient to whether it is sound, decide to approve or redirect, act.

When the human intervenes, they act on: how the system decomposed an objective into executable work. Backpressure: worker success and failure as transitive validation. If workers succeed against their own backpressure, the decomposition was sound. You know you’re here when: the operator provides a one-sentence objective and reviews a generated specification, materials list, and validation suite they did not author.
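A minimal sketch of transitive validation, assuming each generated worker configuration carries its own validation callable. `Decomposition`, `WorkItem`, and the example objective are hypothetical. Treating an empty decomposition as unsound is a design choice: with no workers, nothing has been validated.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class WorkItem:
    name: str
    validate: Callable[[], bool]  # the worker's own backpressure


@dataclass
class Decomposition:
    objective: str
    specification: str
    materials: list[str]
    work: list[WorkItem] = field(default_factory=list)


def decomposition_sound(d: Decomposition) -> tuple[bool, list[str]]:
    """Transitive validation: sound only if every worker passes its own checks.

    Failing item names tell the Order 4 human where to redirect. An empty
    decomposition counts as unsound, since nothing in it was validated.
    """
    failing = [item.name for item in d.work if not item.validate()]
    return (not failing and bool(d.work)), failing


d = Decomposition(
    objective="Let users export reports as CSV.",
    specification="Add a CSV export endpoint for reports, with docs.",
    materials=["reports module", "CSV writer"],
    work=[WorkItem("endpoint", lambda: True), WorkItem("docs", lambda: False)],
)
print(decomposition_sound(d))  # (False, ['docs'])
```

Transitive validation is only as good as the generated validation suites themselves, which is why 3b and 3c are prerequisites.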

Order 5 — KPI Discovery (HOTL: human on the metric-proposal loop)

The system notices patterns in its own outcome data the operator hasn’t asked about. “Deliverables with explicit interface descriptions have 40% fewer review comments. Recommend tracking description completeness.”

When the human intervenes, they act on: what gets measured. Backpressure: evidence that proposed metrics correlate with outcomes the operator already cares about. You know you’re here when: a metric appears that the operator didn’t define, with evidence for why it matters.

Order 6 — Portfolio Optimization (HOTL: human on the resource-allocation loop)

The system manages tradeoffs across multiple objectives. “Task type A converges in 3 loops, type B in 12. Reallocating accordingly.”

When the human intervenes, they act on: how resources distribute across competing priorities. Backpressure: ROI measurement per workload — cost, throughput, and quality per unit of resource. You know you’re here when: a resource allocation table exists that the system authored and executes against.
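A sketch of the allocation table this order produces, assuming each workload has a measured convergence rate, cost per loop, acceptance rate, and an operator-assigned value per task. Splitting workers in proportion to ROI is one simple policy, not the text’s prescription; all names and numbers are hypothetical, with A and B echoing the 3-loop and 12-loop example above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    # Hypothetical per-workload measurements.
    name: str
    loops_to_converge: float  # average loops per completed task
    cost_per_loop: float      # e.g. compute spend per loop
    value_per_task: float     # operator-assigned value of one accepted task
    acceptance_rate: float    # quality: share of tasks accepted


def roi(w: Workload) -> float:
    """Accepted value per unit of spend."""
    cost_per_task = w.loops_to_converge * w.cost_per_loop
    return w.value_per_task * w.acceptance_rate / cost_per_task


def allocate(workloads: list[Workload], workers: int) -> dict[str, int]:
    """Split a fixed worker pool in proportion to ROI (largest-remainder rounding)."""
    total = sum(roi(w) for w in workloads)
    shares = {w.name: workers * roi(w) / total for w in workloads}
    table = {name: int(s) for name, s in shares.items()}
    leftover = workers - sum(table.values())
    # Hand any remaining workers to the largest fractional shares.
    for name in sorted(shares, key=lambda n: shares[n] - table[n], reverse=True)[:leftover]:
        table[name] += 1
    return table


a = Workload("A", loops_to_converge=3, cost_per_loop=1.0, value_per_task=10, acceptance_rate=0.9)
b = Workload("B", loops_to_converge=12, cost_per_loop=1.0, value_per_task=10, acceptance_rate=0.9)
print(allocate([a, b], workers=10))  # {'A': 8, 'B': 2}
```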

Order 7 — Strategy Generation (HOTL: human on the roadmap loop)

The system proposes changes to what the factory produces. “Approach X produces fewer defects than Y for this problem class. Recommend shifting production.”

When the human intervenes, they act on: what the system should build, not how it builds. Backpressure: historical pattern evidence across multiple runs with measurable confidence. You know you’re here when: the system’s output includes recommendations that would change the roadmap.
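A sketch of “measurable confidence” for a recommendation like the one above, assuming defect counts pooled across historical runs for one problem class. Wilson score intervals are our choice of method; the counts are hypothetical. Requiring X’s upper bound to sit below Y’s lower bound is deliberately conservative.

```python
from math import sqrt


def wilson_interval(defects: int, runs: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a defect rate; z = 1.96 gives roughly 95%."""
    if runs == 0:
        return 0.0, 1.0
    p = defects / runs
    denom = 1 + z * z / runs
    center = (p + z * z / (2 * runs)) / denom
    half = z * sqrt(p * (1 - p) / runs + z * z / (4 * runs * runs)) / denom
    return max(0.0, center - half), min(1.0, center + half)


def recommend_shift(x: tuple[int, int], y: tuple[int, int]) -> bool:
    """Recommend approach X over Y only if X's worst case beats Y's best case.

    Each argument is (defects, runs) pooled across historical runs for one
    problem class.
    """
    _, x_upper = wilson_interval(*x)
    y_lower, _ = wilson_interval(*y)
    return x_upper < y_lower


# Hypothetical history for one problem class.
print(recommend_shift((4, 60), (18, 60)))  # True: 60 runs each separate the rates
print(recommend_shift((4, 10), (6, 10)))   # False: too few runs to be confident
```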

Order 8a — Market Response (HOTL: human on the environmental-model loop)

The system detects external changes — new platform capabilities, regulatory shifts, competitive moves — and proposes reorientation.

When the human intervenes, they act on: the system’s interpretation of external signals and proposed response. Backpressure: ground truth validation — did the detected change actually occur? Did the proposed response address it? You know you’re here when: the system flags an environmental change the operator didn’t know about, with a proposed adaptation.
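A sketch of the ground-truth check above, assuming each flag is later resolved as real or not, and each response to a real change as effective or not. `EnvironmentFlag` and the example flags are hypothetical; unresolved flags are excluded rather than counted as misses.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class EnvironmentFlag:
    # Hypothetical record of one external change the system flagged.
    description: str
    occurred: Optional[bool]               # None: not yet confirmed either way
    response_addressed_it: Optional[bool]  # None: not yet judged


def flag_scorecard(flags: list[EnvironmentFlag]) -> tuple[Optional[float], Optional[float]]:
    """Return (share of resolved flags that were real,
    share of judged responses to real changes that addressed them)."""
    resolved = [f for f in flags if f.occurred is not None]
    real = [f for f in resolved if f.occurred]
    judged = [f for f in real if f.response_addressed_it is not None]
    precision = len(real) / len(resolved) if resolved else None
    success = (sum(1 for f in judged if f.response_addressed_it) / len(judged)
               if judged else None)
    return precision, success


flags = [
    EnvironmentFlag("platform added a batch API", True, True),
    EnvironmentFlag("new data-retention rule", True, False),
    EnvironmentFlag("competitor price cut", False, None),
    EnvironmentFlag("rumored deprecation", None, None),
]
precision, success = flag_scorecard(flags)
print(f"real: {precision:.2f}, responses that worked: {success:.2f}")
# real: 0.67, responses that worked: 0.50
```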

Order 8b — Self-Funding (HOTL: human on the budget loop)

The system proposes resource allocation against measured returns, scaling up positive-ROI work and shutting down negative-ROI work.

When the human intervenes, they act on: spending rationale within a fixed envelope. Backpressure: financial outcome tracking — did the spend produce the projected return? You know you’re here when: resource consumption varies period to period and every variance comes with ROI justification.
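A sketch of the checks this order implies, assuming per-workload budget lines with previous spend, current spend, a projected return, and (after the period) a realized return. The names and figures are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class BudgetLine:
    # Hypothetical per-workload budget entry for one period.
    workload: str
    previous_spend: float
    spend: float
    projected_return: float
    justification: Optional[str] = None
    realized_return: Optional[float] = None  # known only after the period


def check_budget(lines: list[BudgetLine], envelope: float) -> list[str]:
    """List what the Order 8b human should look at; empty means nothing."""
    problems = []
    if sum(line.spend for line in lines) > envelope:
        problems.append("spend exceeds the fixed envelope")
    for line in lines:
        if line.spend != line.previous_spend and not line.justification:
            problems.append(f"{line.workload}: variance without ROI justification")
        if line.realized_return is not None and line.realized_return < line.projected_return:
            problems.append(f"{line.workload}: realized return below projection")
    return problems


lines = [
    BudgetLine("A", 40, 60, 120, "converges in 3 loops; scaling up", realized_return=130),
    BudgetLine("B", 40, 0, 0),  # shut down, but no rationale recorded
    BudgetLine("C", 20, 20, 30, realized_return=25),
]
for problem in check_budget(lines, envelope=100):
    print(problem)
# B: variance without ROI justification
# C: realized return below projection
```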

Order 9 — Goal Selection (HOTL: human on the value-function loop)

The system runs parallel instances under different optimization targets and presents comparative results against an operator-provided axiom.

When the human intervenes, they act on: which definition of success to operate under. Backpressure: axiom-relative measurement — which value system produced better outcomes as judged by the operator’s stated axiom? You know you’re here when: the system presents results under competing objective functions and recommends one.
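A minimal sketch of the comparison, assuming each parallel instance reports a small outcome summary and the operator’s axiom can be written as a scoring function. The targets, outcome numbers, and the axiom itself are hypothetical. Returning the full ranking, not just the winner, keeps the human’s judgment on the comparison.

```python
from typing import Callable

Outcome = dict[str, float]
Axiom = Callable[[Outcome], float]


def select_target(results: dict[str, Outcome],
                  axiom: Axiom) -> tuple[str, list[tuple[str, float]]]:
    """Score each instance's outcome by the operator's axiom; recommend the top one."""
    ranking = sorted(((target, axiom(outcome)) for target, outcome in results.items()),
                     key=lambda pair: pair[1], reverse=True)
    return ranking[0][0], ranking


# Hypothetical results from three parallel instances, one per optimization target.
results = {
    "maximize throughput": {"delivered": 120, "defects": 18},
    "minimize defects": {"delivered": 70, "defects": 2},
    "minimize rework": {"delivered": 90, "defects": 4},
}


def axiom(outcome: Outcome) -> float:
    # Hypothetical operator axiom: value delivered, net of harm from defects.
    return outcome["delivered"] - 5 * outcome["defects"]


best, ranking = select_target(results, axiom)
print(best)  # minimize rework
```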

Order 10 — Frame Selection (HOTL: human on the frame loop)

The system evaluates which problem space to inhabit — including the null option of not acting. Frame selection applied to itself produces frame selection. The recursion reaches a fixed point.

When the human intervenes, they act on: which game the system plays. Backpressure: none available. Frame selection cannot be validated without a meta-frame, which is another frame. The human either has conviction about which game to play or doesn’t. You know you’re here when: you can’t tell. Any observable behavior is indistinguishable from a lower order executing within a chosen frame. The decision to remain in the current frame looks identical to never having considered alternatives. The fixed point is silent.

Backpressure as the Enabling Mechanism

Backpressure is not monitoring. It is what converts a loop that requires a human inside it into a loop that can fold into a HOTL node. Without it the human cannot step up: the architecture diagram may claim Order 4 while the human’s attention is still consumed at Order 1.

Backpressure Speed

Backpressure must be fast relative to the loop it serves. A type checker running in milliseconds is excellent backpressure for a code generation loop iterating in minutes. A production defect rate taking two weeks to stabilize is useless for the same loop — but appropriate for a monthly portfolio cycle. Mismatched tempo is the most common failure mode and the hardest to see: the loop iterates confidently while waiting for a signal that arrives too late to correct anything.

| Order | Typical loop | Backpressure speed |
|---|---|---|
| 0 | Seconds | Instant (human reaction time) |
| 1 | Minutes–hours | Seconds (build, test, lint) |
| 2 | Hours–days | Minutes (artifact analysis) |
| 3a–3c | Hours–days | Minutes–hours (aggregation, A/B, outcomes) |
| 4 | Days–weeks | Hours (worker completion) |
| 5–6 | Weeks–months | Days (correlation, ROI) |
| 7–9 | Months–quarters | Weeks (strategic measurement) |
| 10 | Undefined | Undefined |
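A sketch of a tempo check for a loop and its backpressure. The 0.5 ratio (feedback must arrive within half an iteration period) is an assumption, chosen so that the three examples from the Backpressure Speed paragraph come out as the text describes; the timings are illustrative.

```python
from dataclasses import dataclass

MINUTE, DAY = 60.0, 86_400.0


@dataclass(frozen=True)
class FeedbackLoop:
    name: str
    iteration_period: float  # seconds between iterations of the loop
    feedback_latency: float  # seconds until its backpressure reports


def tempo_mismatch(loop: FeedbackLoop, max_ratio: float = 0.5) -> bool:
    """True if backpressure arrives too late to steer the next iteration.

    max_ratio is an assumed rule of thumb: feedback should land within half
    an iteration period. The text gives no specific number.
    """
    return loop.feedback_latency > max_ratio * loop.iteration_period


loops = [
    FeedbackLoop("code generation + type checker", 10 * MINUTE, 2.0),
    FeedbackLoop("code generation + production defect rate", 10 * MINUTE, 14 * DAY),
    FeedbackLoop("monthly portfolio + production defect rate", 30 * DAY, 14 * DAY),
]
for loop in loops:
    print(f"{loop.name}: {'MISMATCH' if tempo_mismatch(loop) else 'ok'}")
```

The same two-week signal is a mismatch for one loop and fine for another; the check only makes sense relative to the loop it serves.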

Reversibility

Backpressure works by letting the loop fail, detecting the failure, and correcting it. This holds only when failure is survivable. Reversible actions (two-way doors) can be fully governed by automated backpressure: fail, detect, correct. Irreversible ones cannot: the failure signal arrives only after the loop has walked through a door that no longer opens. The practical implication is routing, not human gatekeeping. Irreversible actions need pre-execution intervention. Reversible actions flow through the standard backpressure loop. The distinction is a design constraint, not a reason to keep humans inside the loop.
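A minimal sketch of routing by reversibility. Classifying an action as reversible is the hard part and is simply given here; `Action`, `Route`, and the example actions are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    BACKPRESSURE = "execute; automated backpressure detects and corrects failures"
    PRE_EXECUTION = "hold for intervention before executing"


@dataclass(frozen=True)
class Action:
    name: str
    reversible: bool  # assumed known; classifying this is the hard part


def route(action: Action) -> Route:
    """Two-way doors flow through the loop; one-way doors are stopped up front."""
    return Route.BACKPRESSURE if action.reversible else Route.PRE_EXECUTION


for action in [Action("open a pull request", reversible=True),
               Action("drop a production table", reversible=False)]:
    print(f"{action.name} -> {route(action).name}")
# open a pull request -> BACKPRESSURE
# drop a production table -> PRE_EXECUTION
```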

The Backpressure Ceiling

Building autonomous systems is not primarily a model problem or a tooling problem. It is a backpressure engineering problem. The ceiling is not how intelligent the agent is. It is how fast and reliably the loop can be told whether the work is good.

An organization does not need top-level signal to have a functioning system. A single workflow with solid backpressure and a tuning agent compounds within its own ceiling regardless of whether it connects to anything above. The risk of missing higher-order signal is not that local loops fail to work. It is that they work efficiently toward something subtly misaligned with what you ultimately care about. Build local loops first. Wire them to the highest available real signal. Extend upward as the system matures.

Reflections

On the naming. The original version of this framework was called HITL Orders. That name embeds the wrong model. HITL — Human In The Loop — means the loop requires the human as a step. That is only true at Order 0. Every order above it is a fully autonomous loop the human is on. Intervention is not a structural gate. It is an act from the HOTL stance. The loop does not wait for it. These are HOTL Orders.

On the structure. Each order is an OODA loop whose object is the previous order’s loop. The previous loop has compressed — its internal complexity is below the current order’s resolution. The Order 2 human is not managing a Ralph loop. They are watching a synthesis of one. This is why higher orders are tractable: the human is never holding accumulated complexity. They are holding a node that contains it.

On the human. At each transition the human exits a loop by handing its OODA function to an agent, then steps up to watch that agent. They are always on a loop. Never on the same loop twice. At Order 0 this is obvious. At Order 10 it is nominal — the approval surface is so abstract that meaningful oversight becomes an open question. Every organization must decide which order represents its ceiling of responsible operation. The framework does not answer that question. It makes the question legible.

On the branches. Orders 3a, 3b, and 3c represent three independent subject-to-object transitions on three different aspects of Order 2 practice: what is visible (fleet breadth), what is validated (tuning rigor), and what is true (outcome ground truth). Each can be pursued independently and delivers value on its own. The convergence at Order 4 is not an architectural preference. It reflects a minimum requirement: all three must already be folded before objective decomposition can be safely approved.

On practical application. Most engineering organizations operate between Orders 0 and 1. The compound value begins at Orders 2 and 3, where tuning becomes systematic rather than artisanal. Order 4 — where the system decomposes objectives into work — is where autonomous systems begin to feel like infrastructure rather than tools. Everything beyond Order 6 is, for now, a research direction rather than an engineering practice.

The first step is not to build a higher-order system. The first step is to audit your backpressure. Whatever order you aspire to: what automated feedback exists at that order? If the answer is “the human checks,” you have found your actual ceiling. Build the backpressure. The loop folds. The order follows.

References

Geoffrey Huntley, “Ralph Wiggum as a software engineer”

Moss, “Don’t waste your back pressure”

Robert Kegan, The Evolving Self